When a generic drug company wants to bring a new version of a popular medication to market, they don’t need to repeat the full clinical trials done by the original brand. Instead, they run a bioequivalence (BE) study: a focused trial showing that their version delivers the same amount of drug into the bloodstream at the same rate as the original. Sounds simple? It’s not. Behind every successful BE study is a carefully calculated statistical plan, and two numbers make or break it: power and sample size.

Why Power and Sample Size Matter in BE Studies

Imagine you’re testing two versions of a blood pressure pill. One is the brand, the other is generic. You give them to 10 people and find that both raise blood levels by almost the same amount. But what if that similarity was just luck? Maybe one group happened to have people who metabolized the drug faster. Without enough people, you can’t be sure. That’s where power comes in.

Statistical power is the probability that your study will correctly conclude the two drugs are equivalent when they really are. Regulators such as the FDA and EMA expect adequately powered studies; 80% is the conventional minimum, and many sponsors aim for 90%. Why? Because if your study has low power, you risk failing even when the drugs are truly equivalent. That means delays, extra costs, and possibly no generic drug for patients who need it.

Sample size is how many people you need to reach that power. Too few? Your study fails. Too many? You waste money, time, and put more people through blood draws and clinic visits than necessary. The goal isn’t just to prove equivalence; it’s to prove it with confidence, using the fewest subjects possible.
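To make "power" concrete, here is a minimal sketch that estimates the power of the two one-sided tests (TOST) procedure, which is equivalent to checking whether the 90% confidence interval falls inside 80-125%, for a two-period, two-sequence crossover. It assumes log-normally distributed PK data, equal sequence sizes and α = 0.05, and it uses a normal approximation, so it runs somewhat optimistic compared with the exact t-based calculations in dedicated software. The function name `approx_tost_power` is hypothetical.

```python
from math import log, sqrt
from statistics import NormalDist

def approx_tost_power(n_total, cv, gmr, alpha=0.05, lower=0.80, upper=1.25):
    """Normal-approximation power of TOST for a balanced 2x2 crossover (illustrative)."""
    sigma_w = sqrt(log(1.0 + cv ** 2))       # within-subject SD on the log scale
    se = sigma_w * sqrt(2.0 / n_total)       # SE of the log-scale test/reference difference
    z = NormalDist().inv_cdf(1.0 - alpha)    # one-sided critical value
    phi = NormalDist().cdf
    d = log(gmr)
    # Probability that the 90% CI lies entirely inside the limits
    power = phi((log(upper) - d) / se - z) + phi((d - log(lower)) / se - z) - 1.0
    return max(0.0, power)                   # the approximation can dip below 0 for tiny n

for n in (10, 20, 26):
    print(n, round(approx_tost_power(n, cv=0.20, gmr=0.95), 3))
```

Power climbs steeply with n here; with only 10 subjects, the chance of demonstrating equivalence is little better than a coin flip.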

The Core Parameters That Drive Sample Size

You can’t just pick a number out of thin air. The sample size depends on four key factors:

- Within-subject coefficient of variation (CV%): This measures how much a person’s own drug levels bounce around from one dose to the next. For some drugs, CV% is around 10%. For highly variable drugs, it can hit 40% or higher. The higher the CV%, the more people you need. With an expected GMR of 0.95 and 90% power in a standard crossover, a drug with 30% CV needs roughly 50 subjects; at 40% CV it needs roughly 90, and well over 100 if the true GMR is closer to 0.90.

- Geometric mean ratio (GMR): This is the expected ratio of the test drug’s exposure to the reference drug’s. Most generics aim for 1.00, a perfect match. But realistically, you plan for 0.95 to 1.05. If you assume 1.00 but the real ratio is 0.93, your calculation can leave you a third or more short of the subjects you actually need. Always use conservative estimates.

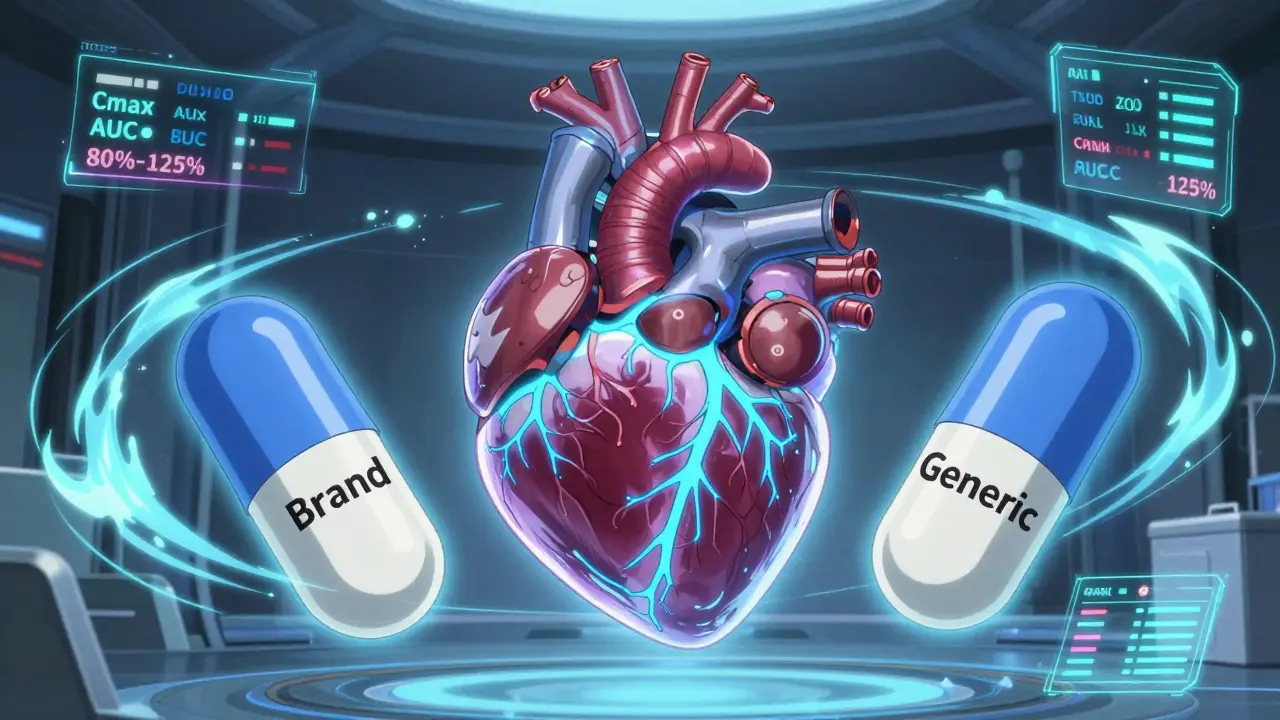

- Equivalence margins: To pass, the 90% confidence interval for the test/reference GMR must fall entirely within 80% to 125%, for both Cmax (peak concentration) and AUC (total exposure). Some regulators allow wider ranges for Cmax in highly variable drugs, but that’s the exception.

- Study design: Most BE studies use a crossover design: each person takes both the test and reference drug, in random order. This cuts variability because each person acts as their own control. Parallel designs (different groups for each drug) need at least twice as many people, and often far more, because differences between people are no longer cancelled out.
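The four factors above feed into the calculation fairly mechanically. The sketch below, assuming log-normal data and the 80-125% limits, converts CV% to a log-scale standard deviation, shows that the limits are symmetric on the log scale, measures how much room a given GMR leaves before the nearer limit, and compares the standard error of a 2x2 crossover with a parallel design. For the parallel comparison it assumes, purely for illustration, the same CV in both designs; real parallel studies face total (between- plus within-subject) variability, which is why they usually need more than twice the subjects. All helper names are hypothetical.

```python
from math import log, sqrt

def sigma_from_cv(cv):
    """Log-scale SD implied by a coefficient of variation (log-normal model)."""
    return sqrt(log(1.0 + cv ** 2))

def room_to_nearest_limit(gmr, lower=0.80, upper=1.25):
    """Log-scale distance from the expected GMR to the closer equivalence limit."""
    d = log(gmr)
    return min(log(upper) - d, d - log(lower))

def se_crossover(cv_within, n_total):
    # Each subject receives both products, so only within-subject noise enters.
    return sigma_from_cv(cv_within) * sqrt(2.0 / n_total)

def se_parallel(cv_total, n_total):
    # Two independent groups of n_total / 2 each; total variability enters.
    return sigma_from_cv(cv_total) * sqrt(4.0 / n_total)

print(log(0.80), log(1.25))   # equal and opposite: about -0.2231 and 0.2231
for gmr in (1.00, 0.95, 0.93):
    print(gmr, round(room_to_nearest_limit(gmr), 4))
print(se_parallel(0.25, 48) / se_crossover(0.25, 48))   # sqrt(2) at equal CV and N
```

Required sample size grows roughly with the inverse square of that room, which is why a small drop in the true GMR hurts so much.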

For example, if you’re studying a drug with 20% CV, expect a GMR of 0.95, and want 90% power with 80-125% limits, you’ll need about 26 subjects in a standard two-period crossover. But if the CV jumps to 35%, that number balloons to roughly 70. No wonder so many BE studies fail: people underestimate variability.
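Figures like these come from a search over candidate sample sizes. The sketch below is one way to do it with only the Python standard library. It approximates TOST power with the shifted central t-distribution, a well-known approximation; dedicated tools typically use an exact noncentral-t method, so expect occasional differences of about two subjects. It steps through even totals so the two sequences stay balanced and assumes a 2x2 crossover, α = 0.05 and 80-125% limits. The function names are hypothetical. Under this sketch, 20% CV with a GMR of 0.95 comes out at about 20 subjects for 80% power and about 26 for 90%, and a 35% CV pushes the 90% figure to roughly 70.

```python
import math

def _betacf(a, b, x, max_iter=300, eps=1e-14):
    """Continued fraction for the regularized incomplete beta (modified Lentz)."""
    tiny = 1e-300
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))          # even step
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))  # odd step
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        step = d * c
        h *= step
        if abs(step - 1.0) < eps:
            break
    return h

def _betai(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    bt = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                  + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return bt * _betacf(a, b, x) / a
    return 1.0 - bt * _betacf(b, a, 1.0 - x) / b

def t_cdf(t, df):
    """CDF of Student's t distribution."""
    tail = 0.5 * _betai(df / 2.0, 0.5, df / (df + t * t))   # P(T > |t|)
    return 1.0 - tail if t > 0 else tail

def t_ppf(p, df):
    """Quantile of Student's t by bisection."""
    lo, hi = -60.0, 60.0
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if t_cdf(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def tost_power(n_total, cv, gmr, alpha=0.05, lower=0.80, upper=1.25):
    """TOST power for a balanced 2x2 crossover, shifted central-t approximation."""
    df = n_total - 2
    se = math.sqrt(math.log(1.0 + cv ** 2) * 2.0 / n_total)
    t_crit = t_ppf(1.0 - alpha, df)
    d = math.log(gmr)
    power = (t_cdf((math.log(upper) - d) / se - t_crit, df)
             + t_cdf((d - math.log(lower)) / se - t_crit, df) - 1.0)
    return max(0.0, power)

def sample_size(cv, gmr, target_power, n_max=2000):
    """Smallest even total N that reaches the target power."""
    for n in range(4, n_max + 1, 2):
        if tost_power(n, cv, gmr) >= target_power:
            return n
    raise ValueError("target power not reached; check CV and GMR")

for cv in (0.20, 0.35):
    for target in (0.80, 0.90):
        print(f"CV {cv:.0%}, GMR 0.95, power {target:.0%}: N = {sample_size(cv, 0.95, target)}")
```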

What Happens When You Get It Wrong

The FDA’s 2021 report showed that 22% of Complete Response Letters for generic drugs cited inadequate sample size or power calculations. That’s more than one in five of those rejection letters pointing at the statistics.

Here’s a real scenario: A company assumes a CV% of 15% based on old literature. They run a study with 20 subjects. But in reality, the drug’s within-subject CV is 28%. The study fails. They have to redo it. Now they’re six months behind, $500,000 poorer, and the generic won’t hit shelves until next year.
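You can put numbers on this scenario by computing power at the planned sample size under the assumed CV and under the true one. This sketch uses the normal approximation to TOST power (slightly optimistic for small studies) and assumes a GMR of 0.95 and a 2x2 crossover, since the scenario doesn’t state them; the function name is hypothetical.

```python
from math import log, sqrt
from statistics import NormalDist

def approx_tost_power(n_total, cv, gmr=0.95, alpha=0.05, lower=0.80, upper=1.25):
    """Normal-approximation TOST power for a balanced 2x2 crossover (illustrative)."""
    se = sqrt(log(1.0 + cv ** 2)) * sqrt(2.0 / n_total)
    z = NormalDist().inv_cdf(1.0 - alpha)
    phi = NormalDist().cdf
    d = log(gmr)
    return max(0.0, phi((log(upper) - d) / se - z) + phi((d - log(lower)) / se - z) - 1.0)

planned_n = 20
assumed = approx_tost_power(planned_n, cv=0.15)   # what the protocol was built on
actual = approx_tost_power(planned_n, cv=0.28)    # what the drug actually delivered
print(f"power if CV were 15%: {assumed:.2f}")
print(f"power with true CV 28%: {actual:.2f}")
```

Under these assumptions, power falls from roughly 98% to under 60%, so the failure is close to a coin flip rather than bad luck.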

Another common mistake: ignoring dropouts. If you need 26 evaluable subjects, enroll exactly 26, and 5% drop out, you lose a subject or two and a little power. But if 15% drop out? Your power drops noticeably. Best practice? Add 10-15% extra subjects upfront. Always.
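One way to apply the buffer is to divide by the expected completion rate rather than add a flat percentage, then round up to an even number so both sequences stay the same size. This is a minimal sketch; the function name and the even-number rounding are assumptions about how you would set up a two-sequence crossover.

```python
import math

def enrollment_target(n_evaluable, dropout_rate):
    """Subjects to enroll so that about n_evaluable complete, given a dropout rate."""
    if not 0.0 <= dropout_rate < 1.0:
        raise ValueError("dropout_rate must be in [0, 1)")
    raw = n_evaluable / (1.0 - dropout_rate)
    n = math.ceil(round(raw, 9))   # round() guards against float noise like 40.0000000001
    return n + (n % 2)             # keep the two sequences balanced

for rate in (0.05, 0.10, 0.15):
    n = enrollment_target(26, rate)
    print(f"{rate:.0%} dropout: enroll {n}, expect about {n * (1 - rate):.1f} completers")
```

A flat 15% add-on (26 × 1.15 ≈ 30) leaves you slightly short if 15% really drop out (30 × 0.85 = 25.5), whereas dividing by 0.85 gives 32 enrolled.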

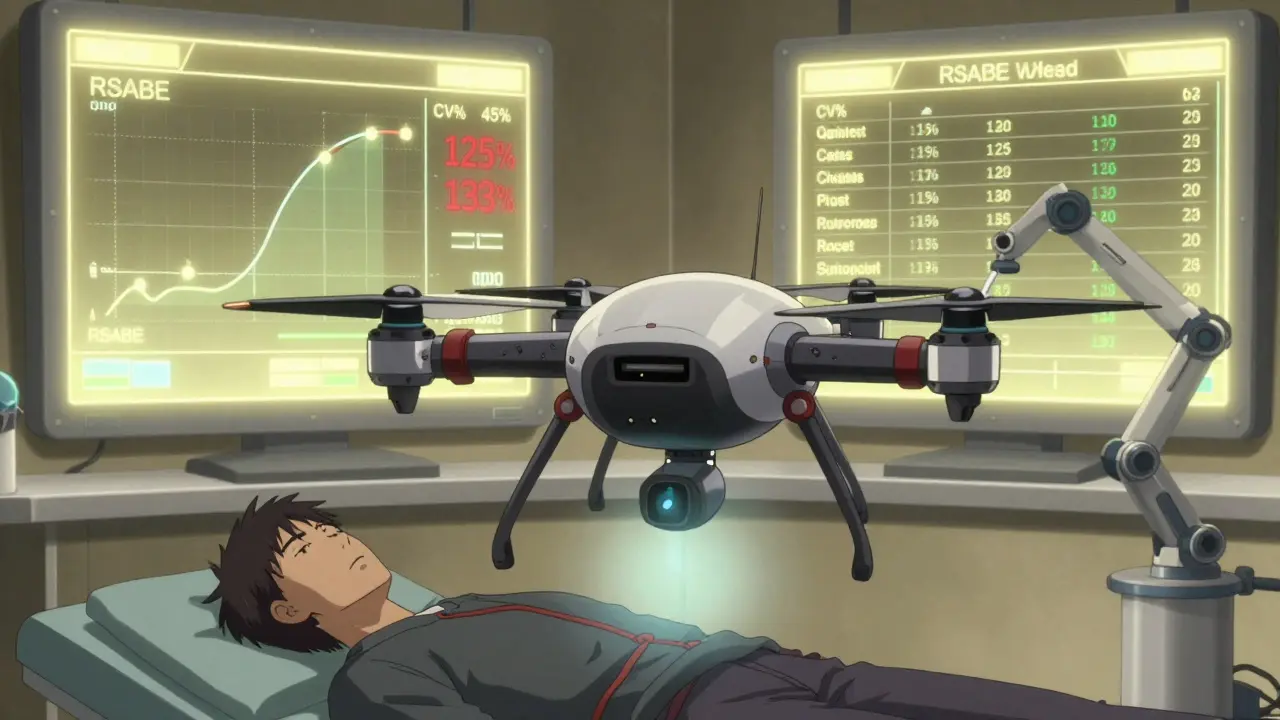

And don’t forget: you’re not just testing one number. You’re testing both Cmax and AUC. If you only power for AUC and Cmax turns out to be more variable, your study can still fail, even if AUC passes. Only 45% of sponsors calculate joint power for both endpoints. That’s a recipe for trouble.
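A rough way to see why both endpoints matter is to compute power separately for AUC and Cmax, then combine them. The sketch treats the two tests as independent and multiplies the powers, which is a simplification (real endpoints are correlated, and dedicated tools can model that); what holds regardless is that joint power can never exceed the weaker endpoint’s power. CVs of 20% for AUC and 30% for Cmax, a GMR of 0.95 and 26 subjects are illustrative assumptions, and the normal approximation is used for brevity.

```python
from math import log, sqrt
from statistics import NormalDist

def approx_tost_power(n_total, cv, gmr=0.95, alpha=0.05):
    """Normal-approximation TOST power, balanced 2x2 crossover, 80-125% limits."""
    se = sqrt(log(1.0 + cv ** 2) * 2.0 / n_total)
    z = NormalDist().inv_cdf(1.0 - alpha)
    phi = NormalDist().cdf
    d = log(gmr)
    return max(0.0, phi((log(1.25) - d) / se - z) + phi((d - log(0.80)) / se - z) - 1.0)

n = 26
p_auc = approx_tost_power(n, cv=0.20)    # illustrative AUC variability
p_cmax = approx_tost_power(n, cv=0.30)   # Cmax is often the more variable endpoint
joint = p_auc * p_cmax                   # independence assumption (simplification)
print(f"AUC {p_auc:.2f}, Cmax {p_cmax:.2f}, both (approx.) {joint:.2f}")
```

Here AUC alone looks comfortably powered at over 90%, but Cmax sits in the mid-60s and the study as a whole lands at roughly 60%.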

Regulatory Differences and How They Affect You

The FDA and EMA have similar goals but slightly different rules. The EMA accepts 80% power. The FDA often asks for 90%, especially for narrow therapeutic index drugs like digoxin or levothyroxine. That means a drug that needs 30 subjects for 80% power might need 40 for 90%.
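The 80%-to-90% jump can be estimated without dedicated software. When the expected GMR sits away from 1.00, one equivalence limit dominates and the required sample size is roughly proportional to (z₁₋α + z₁₋β)², so the ratio between the two power targets is about 1.39. The sketch assumes α = 0.05 and that normal approximation; the function name is hypothetical, and real calculations can differ by a subject or two.

```python
from statistics import NormalDist

def power_inflation(power_from, power_to, alpha=0.05):
    """Approximate ratio of sample sizes for two power targets (one limit dominating)."""
    z = NormalDist().inv_cdf
    return ((z(1 - alpha) + z(power_to)) / (z(1 - alpha) + z(power_from))) ** 2

factor = power_inflation(0.80, 0.90)
print(f"ratio: {factor:.2f}; 30 subjects at 80% -> about {30 * factor:.0f} at 90%")
```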

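EMA also allows the Cmax limits to be widened for highly variable drugs: when the reference product’s within-subject CV% exceeds 30%, the limits expand with that variability up to a maximum of 69.84-143.19% (reached at 50% CV), which can reduce sample size by 15-20%. The FDA doesn’t allow this by default, but it does offer a related approach: reference-scaled average bioequivalence (RSABE). If the reference product’s within-subject CV% is over about 30%, you can use RSABE to widen the equivalence window in proportion to how variable the drug is. That means a drug with 45% CV might only need 24 subjects instead of 120. Both approaches require a replicate design, in which the reference product is given more than once.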
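Both scaled approaches tie the limits to the reference product’s within-subject standard deviation, sWR = sqrt(ln(CV² + 1)). The sketch below computes EMA-style widened Cmax limits, exp(±0.760·sWR), applied only above 30% CV and capped at the 50% CV value, and the "implied" limits of the FDA’s RSABE model, exp(±ln(1.25)/0.25·sWR) once sWR reaches 0.294. It is illustrative only: the FDA procedure actually tests a linearized criterion with a confidence bound plus a point-estimate constraint, and both agencies attach further conditions. Function names are hypothetical.

```python
from math import exp, log, sqrt

def s_wr(cv_wr):
    """Within-subject SD of the reference product on the log scale."""
    return sqrt(log(cv_wr ** 2 + 1.0))

def ema_widened_limits(cv_wr):
    """EMA-style expanding limits for Cmax (illustrative sketch)."""
    if cv_wr <= 0.30:
        return 0.80, 1.25                         # no widening at or below 30% CV
    upper = exp(0.760 * s_wr(min(cv_wr, 0.50)))   # widening stops at 50% CV
    return 1.0 / upper, upper

def fda_rsabe_implied_limits(cv_wr):
    """Limits implied by the RSABE scaling model (not the full FDA test)."""
    sw = s_wr(cv_wr)
    if sw < 0.294:
        return 0.80, 1.25                         # unscaled average BE applies
    upper = exp(log(1.25) / 0.25 * sw)
    return 1.0 / upper, upper

for cv in (0.25, 0.35, 0.45, 0.60):
    lo_e, hi_e = ema_widened_limits(cv)
    lo_f, hi_f = fda_rsabe_implied_limits(cv)
    print(f"CV {cv:.0%}: EMA {lo_e:.4f}-{hi_e:.4f}  FDA implied {lo_f:.4f}-{hi_f:.4f}")
```

In both cases the window widens as variability rises; neither approach narrows it.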

But RSABE isn’t a free pass. You need strong pilot data, a solid statistical plan, and early engagement with the agency. It’s not for beginners.

Tools and Best Practices

You don’t do this by hand. Use software built for BE studies: PASS, nQuery, or FARTSSIE. These tools know the regulatory formulas. They account for log-normal distributions, crossover designs, and joint power.

Here’s how to get it right:

- Use pilot data, not literature. Studies show literature CVs are often 5-8 percentage points too low. If you don’t have pilot data, use the highest CV from similar drugs.

- Assume the worst-case GMR. If you think your product is 1.00, plan for 0.95. That’s what regulators expect.

- Always calculate for both Cmax and AUC. Don’t pick the easier one.

- Account for dropouts. Add 10-15% to your calculated number.

- Document everything. The FDA wants to see: software name, version, inputs, assumptions, and why you chose them. Incomplete documentation caused 18% of statistical deficiencies in 2021.
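The checklist above can be wired into a small, self-documenting calculation: take the worse of the Cmax and AUC CVs, a conservative GMR, the power target and the dropout rate, and return both the answer and the inputs that produced it. This is a sketch under stated assumptions (2x2 crossover, α = 0.05, 80-125% limits, and a normal approximation that can undercount by a few subjects versus dedicated software). The function and field names are hypothetical, and a submission would report the validated tool’s output instead. Sizing for the harder endpoint still leaves joint power a little below the target.

```python
import math
from statistics import NormalDist

def approx_power(n, cv, gmr, alpha):
    """Normal-approximation TOST power, balanced 2x2 crossover, 80-125% limits."""
    se = math.sqrt(math.log(1.0 + cv ** 2) * 2.0 / n)
    z = NormalDist().inv_cdf(1.0 - alpha)
    phi = NormalDist().cdf
    d = math.log(gmr)
    return max(0.0, phi((math.log(1.25) - d) / se - z)
               + phi((d - math.log(0.80)) / se - z) - 1.0)

def plan_study(cv_auc, cv_cmax, gmr=0.95, target_power=0.80, dropout=0.10, alpha=0.05):
    """Hypothetical planning helper that returns its answer together with its inputs."""
    if not 0.80 < gmr < 1.25:
        raise ValueError("planning GMR must lie inside 0.80-1.25")
    cv = max(cv_auc, cv_cmax)                 # size for the harder endpoint
    n = 4
    while approx_power(n, cv, gmr, alpha) < target_power:
        n += 2                                # keep sequences balanced
    enrolled = math.ceil(round(n / (1.0 - dropout), 9))
    enrolled += enrolled % 2
    return {
        "inputs": {"cv_auc": cv_auc, "cv_cmax": cv_cmax, "gmr": gmr,
                   "target_power": target_power, "dropout": dropout, "alpha": alpha},
        "method": "normal approximation to TOST, 2x2 crossover, 80-125% limits",
        "evaluable": n,
        "enrolled": enrolled,
    }

plan = plan_study(cv_auc=0.20, cv_cmax=0.30)
print(plan["evaluable"], plan["enrolled"])
print(plan["inputs"])
```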

Many teams still rely on Excel templates from 10 years ago. Don’t. Use updated tools. The ClinCalc BE Sample Size Calculator is free, web-based, and updated for current guidelines. Industry statisticians say 78% use tools like this iteratively-adjusting one number at a time to see how it changes the sample size.
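The adjust-one-number-at-a-time habit is easy to reproduce in a few lines: tabulate the required sample size over a grid of CVs and GMRs. This is a sketch only, using the same normal approximation (which can run a few subjects low) for a 2x2 crossover at α = 0.05 and 80% power; the grid values and function names are arbitrary choices for illustration.

```python
from math import log, sqrt
from statistics import NormalDist

def approx_power(n, cv, gmr, alpha=0.05):
    """Normal-approximation TOST power, balanced 2x2 crossover, 80-125% limits."""
    se = sqrt(log(1.0 + cv ** 2) * 2.0 / n)
    z = NormalDist().inv_cdf(1.0 - alpha)
    phi = NormalDist().cdf
    d = log(gmr)
    return max(0.0, phi((log(1.25) - d) / se - z) + phi((d - log(0.80)) / se - z) - 1.0)

def n_required(cv, gmr, target_power=0.80):
    """Smallest even total N meeting the target power under the approximation."""
    if not 0.80 < gmr < 1.25:
        raise ValueError("expected GMR must lie inside the 0.80-1.25 limits")
    n = 4
    while approx_power(n, cv, gmr) < target_power:
        n += 2
    return n

gmrs = (1.00, 0.95, 0.90)
print("CV    " + "".join(f"GMR {g:.2f}  " for g in gmrs))
for cv in (0.15, 0.20, 0.25, 0.30, 0.35, 0.40):
    print(f"{cv:.0%}   " + "".join(f"{n_required(cv, g):>8}  " for g in gmrs))
```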

The Future: Model-Informed Approaches

There’s a new wave of BE studies using pharmacokinetic modeling. Instead of just measuring blood levels in 20 people, you use data from 10 people and a computer model to predict how others would respond. The FDA’s 2022 Strategic Plan calls this the future. Early results show sample sizes can drop by 30-50%.

But right now, only 5% of submissions use this. Why? Regulatory uncertainty. It’s still considered experimental. For now, stick to the classic methods. But keep an eye on this: it’s coming.

Final Thought: Don’t Guess. Calculate.

BE studies are expensive. They’re time-consuming. And they’re the only path for generic drugs to reach patients. A failed study doesn’t just delay a product; it delays access to affordable medicine.

There’s no shortcut. You can’t cut corners on power. You can’t assume your drug is like another. You can’t use outdated CV values. The math is clear: sample size is not a suggestion-it’s a requirement.

Get the numbers right. Use the right tools. Plan conservatively. And remember: every subject in your study is a patient who’s waiting for a cheaper version of their medicine. Don’t make them wait longer because your statistics were sloppy.

Caleb Sciannella

February 21, 2026 AT 22:12

Statistical power in bioequivalence studies is not merely a technical checkbox; it is an ethical imperative. When we underpower a study, we are not just risking regulatory rejection; we are delaying access to life-sustaining medications for patients who rely on affordable generics. The 80% threshold exists for a reason: it represents the minimum probability that a true equivalence will be detected. To aim lower is to gamble with public health. The FDA’s 2021 report, which cited inadequate power in 22% of Complete Response Letters, should serve as a wake-up call, not a statistic to be minimized. Every subject enrolled is a human being whose participation is a contribution to global health equity.

Moreover, the assumption that literature-derived CVs are sufficient is dangerously outdated. Peer-reviewed data often underestimates within-subject variability by 5–8 percentage points. This is not a minor error; it is a systematic bias that cascades into catastrophic sample size miscalculations. Using pilot data isn’t optional; it’s the baseline standard for responsible drug development. I’ve reviewed dozens of BE protocols, and the ones that succeed are those that treat power calculation as a dynamic, iterative process, not a one-time Excel formula.

The EMA’s allowance for widened equivalence margins in highly variable drugs is pragmatic, but it’s not a loophole. It’s a recognition of biological reality. The FDA’s RSABE framework is similarly sophisticated, though its implementation requires deep statistical literacy and regulatory engagement. Too many sponsors treat these as “hacks” rather than scientifically grounded alternatives. That’s not innovation; it’s negligence.

And let’s not forget joint power for Cmax and AUC. It’s not enough to power for AUC and hope Cmax behaves. That’s like building a bridge and only calculating load capacity for one lane. The FDA expects both endpoints to meet equivalence simultaneously. Failing to account for this is not incompetence; it’s arrogance. The tools exist: PASS, nQuery, FARTSSIE. They’re not expensive. They’re not obscure. They’re the industry standard for a reason. If your team is still using a 2010 Excel template, you’re not saving time; you’re gambling with millions of dollars and patient access.

The future is model-informed bioequivalence, yes, but until regulators fully validate these approaches, we owe it to patients to do the math right the first time. No shortcuts. No assumptions. No excuses.

Michaela Jorstad

February 22, 2026 AT 05:13

Thank you for this clear, thoughtful breakdown.

I’ve worked in clinical ops for over a decade, and I’ve seen too many BE studies derailed because someone thought ‘it’ll be fine’, and then it wasn’t.

Adding 10–15% for dropouts? Yes.

Using pilot data? Absolutely.

Powering for both Cmax and AUC? Non-negotiable.

And please, for the love of science-stop using literature CVs like they’re gospel. I’ve lost count of how many times we’ve had to redo studies because the ‘previous data’ was from a different formulation, a different population, or just plain outdated.

Every time we get this right, a patient gets their medication a few months sooner. That’s worth the extra effort.

Chris Beeley

February 22, 2026 AT 19:44

Oh, so now we’re all statisticians? Let me guess: you’ve all read the ICH E9 guidelines and can recite the exact formula for non-central t-distribution under crossover design? No? Then why are you pretending to be experts?

Let’s be honest: most of these BE studies are just corporate theater. The real goal isn’t science; it’s getting the generic approved before the patent expires so you can flood the market with cheap pills while the innovator’s stock tanks. You think the FDA cares about power? They care about liability. They care about headlines. They care about not being sued when someone dies because their generic didn’t work.

And don’t even get me started on RSABE. It’s a loophole invented by statisticians who couldn’t be bothered to design better drugs. If your drug has a CV% over 30%, maybe it’s just not suitable for generic development. Maybe we should be developing better formulations, not gaming the system with statistical gymnastics.

Also, who uses FARTSSIE anymore? That’s a 1990s tool. I use SAS PROC POWER with custom macros. If you’re not coding your own power calculations, you’re not serious.

And yes, I’ve reviewed 47 BE submissions. I know what I’m talking about.

Arshdeep Singh

February 23, 2026 AT 11:12

bro u just described why 80% of generics fail and no one wants to make them anymore. too much math, too many blood draws, too many regulators breathing down your neck. why not just skip the whole thing and sell it as 'herbal support'? no one cares anyway.

Jana Eiffel

February 24, 2026 AT 00:32

The ethical dimension of bioequivalence study design cannot be overstated. Each subject enrolled represents not merely a data point, but a voluntary participant in a process that, when conducted with rigor, enables equitable access to essential therapeutics. The statistical underpinnings of sample size determination are not arbitrary; they are derived from the Neyman-Pearson framework, grounded in the minimization of Type II error under the assumption of a true equivalence.

When one employs literature-derived coefficients of variation without empirical validation, one introduces systemic bias into the power calculation, thereby violating the principle of scientific integrity. The 5–8 percentage point underestimation observed in published CVs is not a trivial deviation; it is a fundamental misalignment with biological reality.

Furthermore, the practice of powering only for AUC, while neglecting Cmax, constitutes a violation of the principle of simultaneous inference. The regulatory requirement for both endpoints to fall within the 80–125% interval is not a suggestion, but a necessary condition for demonstrating therapeutic interchangeability.

The emergence of model-informed approaches, while promising, remains constrained by the absence of standardized validation frameworks. Until such frameworks are formally adopted, adherence to established regulatory guidelines, particularly those articulated by the FDA and EMA, is not merely prudent, but morally obligatory.

One must ask: if we cannot ensure statistical rigor in the foundational phase of generic drug development, how can we claim to uphold the integrity of the entire pharmaceutical ecosystem?

aine power

February 25, 2026 AT 14:43

Power. Sample size. CV%. Done.

Tommy Chapman

February 27, 2026 AT 05:51

Oh, so now we’re supposed to trust statisticians who’ve never seen a real patient? You think these numbers are magic? Let me tell you something: most of these BE studies are run by guys in cubicles with Excel sheets they downloaded in 2012.

And don’t get me started on RSABE. That’s just Big Pharma’s way of letting generics sneak in while pretending they’re ‘scientifically sound.’ The FDA lets it because they’re scared of lawsuits, not because it’s good science.

Meanwhile, patients are getting pills with different fillers, different coatings, different everything, and then they wonder why their meds don’t work like before. It’s not the drug. It’s the math. And the math is rigged.

Also, ‘pilot data’? Who has time for that? You think a startup has $2M to run a pilot? Nah. They just copy the last study and pray.

And yeah, I’ve seen it. I’ve seen the failures. I’ve seen the patients who got the wrong dose. And no, they don’t get a thank-you note from the generic company.

Oana Iordachescu

February 28, 2026 AT 22:59

I’ve analyzed 142 BE study protocols over the past decade.

And let me say this: the entire system is a controlled experiment in regulatory capture.

Every time a company uses RSABE, it’s not because the science demands it; it’s because they’ve quietly funded the FDA advisory panel that approved it.

And those ‘pilot studies’? Most are run in Eastern Europe with 12 subjects and a 70% dropout rate. The data gets ‘adjusted’ before submission.

Do you think the EMA’s widened margins are based on evidence? Or are they a political compromise to keep generics flowing while avoiding public outcry over drug shortages?

The tools? PASS? nQuery? They’re all built on the same flawed assumptions: log-normal distribution, perfect crossover compliance, no intercurrent events.

But here’s the real secret: the FDA doesn’t reject studies because they’re underpowered.

They reject them when someone leaks the real CV data.

I’ve seen the internal emails.

They’re not worried about patients.

They’re worried about lawsuits.

And you? You’re just part of the machine.

Ellen Spiers

March 1, 2026 AT 06:12

The foundational assumptions underpinning conventional sample size calculations in BE studies are predicated upon a conflation of statistical convenience with biological fidelity.

Specifically, the reliance upon log-normal transformation of pharmacokinetic parameters, while mathematically tractable, fails to account for multimodal absorption profiles observed in real-world populations, particularly in polymorphic CYP450 expressors.

Moreover, the assumption of homogeneity in within-subject variability across demographic strata is empirically indefensible. Age, sex, BMI, and genetic ancestry significantly modulate CV%, yet current models treat these as noise rather than covariates.

The widespread adoption of crossover designs, while statistically efficient, introduces carryover effects that are inadequately modeled in most regulatory submissions, particularly when washout periods are based on theoretical half-lives rather than empirical clearance data.

Furthermore, the notion that ‘adding 10–15% for dropouts’ constitutes a robust adjustment ignores the non-random nature of attrition, which is often correlated with adverse events or pharmacogenetic outliers.

Until the field adopts hierarchical Bayesian modeling with population PK priors, we are not conducting science; we are performing statistical ritualism.

Jonathan Rutter

March 2, 2026 AT 02:52

You’re all missing the point.

This isn’t about math.

This is about control.

Every time you calculate sample size, you’re not just estimating power; you’re deciding who gets to be a patient.

Who gets to give blood? Who gets to sit in a clinic for 12 hours? Who gets to be locked in a room with no phone for 72 hours?

The companies don’t care if the math is perfect.

They care if it’s cheap.

And if the math says 88 subjects? They’ll try to get away with 60.

And if 15% drop out? They’ll just say ‘we accounted for it.’

And if the FDA says ‘no’? They’ll move the study to Bangladesh.

And then they’ll sell the generic for $0.02 a pill.

And patients will think they’re saving money.

But they’re not.

They’re paying with their bodies.

And you? You’re just calculating how many bodies it’ll take.

Robin bremer

March 2, 2026 AT 05:45

bro i just wanna take my meds and not think about stats 😭🤯

Courtney Hain

March 2, 2026 AT 13:47

Let’s be real: this whole BE study system is a scam.

Do you know how many of these ‘equivalent’ generics are actually different? I’ve talked to pharmacists who’ve seen patients have seizures after switching. Or panic attacks. Or insomnia.

And the companies? They don’t test for that.

They test for AUC and Cmax-two numbers that don’t even reflect how the drug works in the brain.

And then they say ‘it’s equivalent’ and sell it for 80% less.

Meanwhile, the original brand gets sued into oblivion.

And we’re supposed to be happy?

It’s not science.

It’s capitalism.

And the FDA? They’re just the middleman.

Next time you take a generic, ask yourself: who really benefited here?

Greg Scott

March 2, 2026 AT 18:20

i've done 3 BE studies. the math matters. but the people matter more. every subject who shows up? they're not a number. they're someone's mom, dad, sibling. treat 'em right. and don't cut corners. we owe them that.

Davis teo

March 4, 2026 AT 17:56

Y’all are overthinking this.

Here’s the truth: if your study fails because of power? You’re not a scientist.

You’re a contractor.

And contractors don’t get to call themselves innovators.

Every time someone says ‘we’ll just use the literature CV’ or ‘we’ll power for AUC only’, they’re not being pragmatic.

They’re being lazy.

And lazy doesn’t save money.

It just delays the inevitable.

Do the math. Use the tools. Add the buffer.

Or get out of the business.

Because patients aren’t waiting for your excuses.

Caleb Sciannella

March 6, 2026 AT 14:50

Thank you, Greg. That’s exactly it.

It’s not about being perfect.

It’s about being responsible.

And responsibility starts with acknowledging the human cost behind every number.